These are verifiable facts: you can build a histogram of file sizes for your own node, compare it with the sector size, and run the same thought experiment.
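If you want to reproduce such a histogram, here is a minimal Python sketch. It assumes a 4 KiB allocation unit (check what your filesystem actually uses) and that you point it at the directory where your node keeps its data. The function name `cluster_histogram` is hypothetical. Each file size is rounded up to whole clusters, so bucket 1 holds every file that fits in a single allocation unit.

```python
import os
import sys
from collections import Counter


def cluster_histogram(root: str, cluster_size: int = 4096) -> Counter:
    """Count files under `root` by how many allocation units they occupy.

    Sizes are rounded up to whole clusters; an empty file lands in bucket 0.
    The 4096-byte default is an assumption, not a property of every filesystem.
    """
    histogram: Counter = Counter()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.path.getsize(path)
            except OSError:
                # File vanished or is unreadable mid-scan; skip it.
                continue
            clusters = -(-size // cluster_size)  # ceiling division
            histogram[clusters] += 1
    return histogram


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    hist = cluster_histogram(root)
    total = sum(hist.values())
    for clusters in sorted(hist):
        count = hist[clusters]
        print(f"{clusters:>6} clusters: {count:>10} files ({100 * count / total:.2f}%)")
```

Saved under any name, it runs as `python hist.py <storage-dir>`. On a directory with millions of small files the scan takes a while, since it stats every file once.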

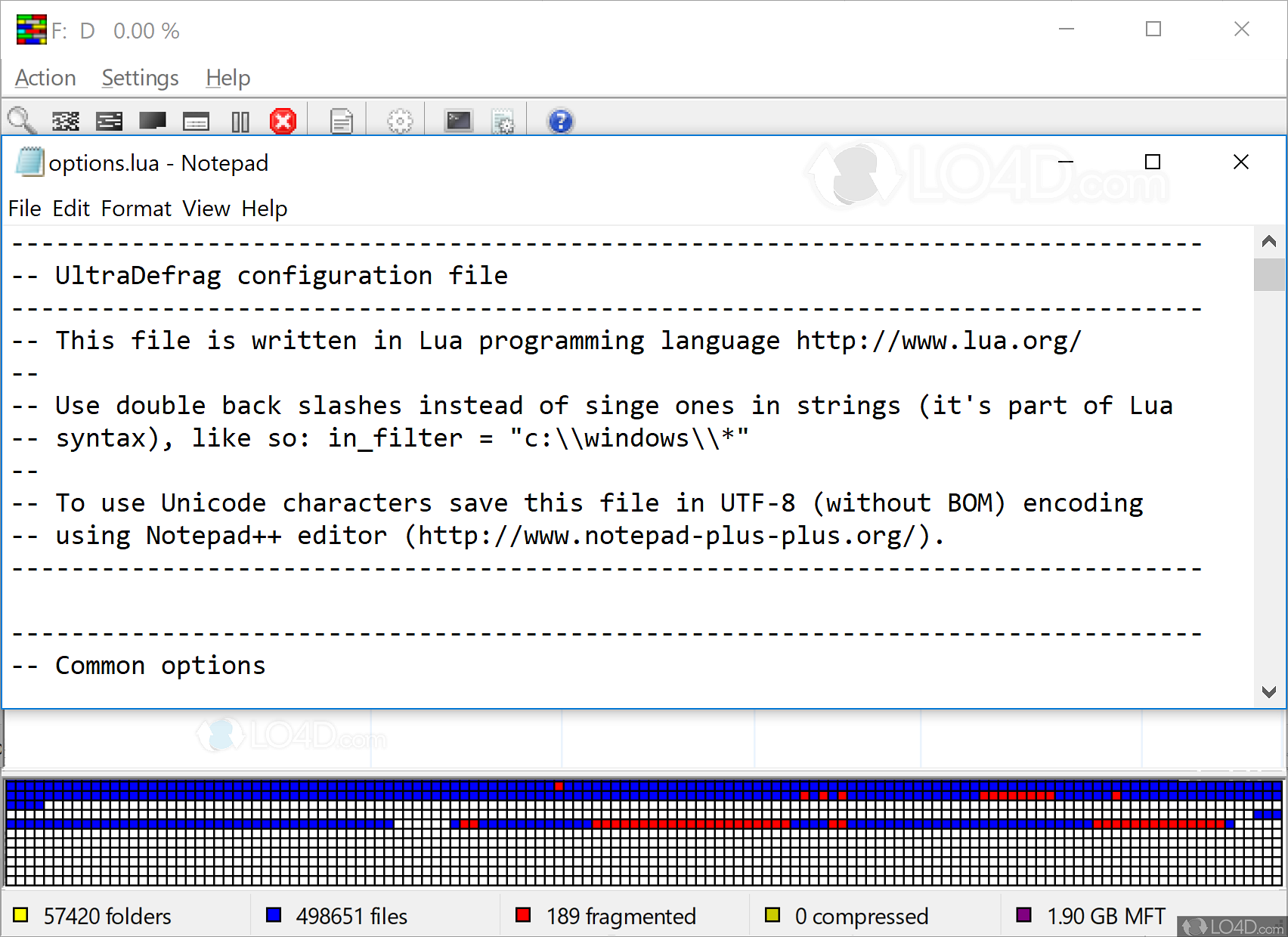

At the same time, the probability that those files will be accessed is therefore just as tiny, so whatever small benefit could be gained likely won't be realized. Hence, defragmenting the disk may have a small positive effect on a few thousand files (you still pay for the metadata seek and only eliminate the mid-read seek), and no effect on the many millions of smaller files.
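To put rough numbers on this, here is a back-of-envelope model in Python. It assumes, hypothetically, that every read costs one metadata seek plus one seek per extent, that reads are spread uniformly across files, and that defragmentation collapses every fragmented file into a single extent. The function name and the sample figures are illustrative, not measurements from a real node; substitute your own counts.

```python
def relative_seek_savings(total_files: int, fragmented_files: int, avg_extents: float) -> float:
    """Fraction of seeks saved by defragmenting, under a simple uniform-access model.

    Assumptions (hypothetical, for illustration):
      * every read costs 1 metadata seek + 1 seek per extent;
      * each file is equally likely to be read;
      * defragmenting turns every fragmented file into a single extent.
    """
    if total_files <= 0 or not 0 <= fragmented_files <= total_files:
        raise ValueError("need total_files > 0 and 0 <= fragmented_files <= total_files")
    if avg_extents < 1:
        raise ValueError("a file has at least one extent")
    p_frag = fragmented_files / total_files
    # Expected seeks per read before defragmentation.
    before = (1 - p_frag) * (1 + 1) + p_frag * (1 + avg_extents)
    # After defragmentation every file is one extent: metadata seek + one data seek.
    after = 2.0
    return (before - after) / before


# Hypothetical node: 5 million files, 3 thousand of them split into 2 extents.
print(f"{relative_seek_savings(5_000_000, 3_000, 2.0):.5%}")
```

With these made-up inputs the saving comes out at roughly 0.03% of all seeks, because the metadata seek remains and only the rare mid-read seek disappears.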

Random access, driven by customer requests, is the bottleneck here, on top of the database's usual file locking and updates. Moreover, in node operation sequential reads are never a bottleneck: the node does so few of them that they are not even worth the bytes this message consists of. This latter point is irrelevant for Storj anyway: the vast majority of files are smaller than 4k, i.e. they cannot get fragmented by definition, and the rest are smaller than 16k. Provided that file allocation is quantized by the sector size and you have sufficient free space, only a fraction of that fraction ends up fragmented.
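The "cannot get fragmented by definition" argument reduces to a simple count: a file that fits in one allocation unit is always contiguous, so only files larger than one cluster are even candidates. The sketch below, with a hypothetical function name and an assumed 4 KiB cluster, computes that candidate share from a list of file sizes.

```python
from typing import Iterable


def fragmentable_fraction(sizes: Iterable[int], cluster_size: int = 4096) -> float:
    """Share of files that occupy more than one allocation unit.

    A file that fits in a single cluster is stored contiguously by definition,
    so only files spanning two or more clusters can be fragmented at all.
    The 4096-byte default is an assumption about the filesystem.
    """
    total = 0
    multi_cluster = 0
    for size in sizes:
        total += 1
        if size > cluster_size:
            multi_cluster += 1
    return multi_cluster / total if total else 0.0


# Hypothetical sizes in bytes; only 6000 and 12000 span more than one cluster.
print(fragmentable_fraction([512, 1500, 3000, 4096, 6000, 12000]))
```

This is only an upper bound. With enough free space the allocator places most multi-cluster files contiguously, so the share that actually ends up fragmented is a fraction of this number.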
